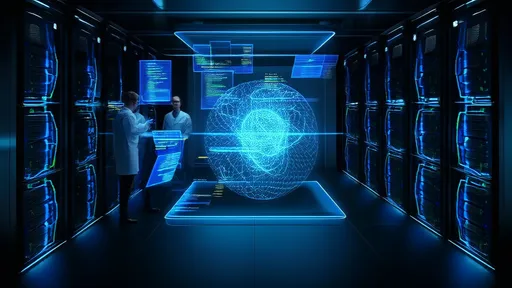

For decades, paralysis has confined individuals to the limitations of their physical bodies, but a groundbreaking fusion of brain-computer interface (BCI) technology and virtual reality (VR) is now rewriting the rules. In a landmark achievement, researchers have enabled paralyzed patients to navigate a digital world—walking, interacting, and even experiencing a sense of agency in the metaverse. This leap forward isn’t just about technological spectacle; it’s a profound restoration of autonomy for those who’ve long been denied it.

The experiment, led by a collaborative team of neuroscientists and engineers, involved patients with spinal cord injuries using non-invasive BCI headsets to control avatars in a VR environment. By decoding neural signals associated with movement intention, the system translated thoughts into virtual motion. One participant, immobilized for over five years, described the experience as "like waking up from a long dream—suddenly, my legs were mine again." The emotional weight of such moments underscores the potential of this technology to transcend physical constraints.

Unlike traditional VR, which relies on handheld controllers or body tracking, this approach bypasses the body entirely. Electroencephalography (EEG) caps record brain activity as users imagine walking or reaching, while machine learning algorithms interpret these patterns in real time. The result is a seamless, intuitive control system that feels less like operating a tool and more like an extension of the self. Researchers emphasize that the metaverse, often criticized as a playground for the able-bodied, could become a sanctuary for mobility-impaired individuals—a space where social and physical barriers dissolve.
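To make the decoding step concrete, here is a minimal sketch of how imagined movement might be detected from a single EEG channel. It rests on assumptions the article does not confirm: that the system watches the mu rhythm (roughly 8 to 12 Hz), whose power is known to drop over the sensorimotor cortex during imagined movement, and that a fixed threshold stands in for the machine learning classifier the researchers describe. The function names, the `erd_ratio` parameter, and the synthetic signal are all hypothetical.

```python
import math

def band_power(samples, fs, lo_hz, hi_hz):
    """Mean power of `samples` across DFT bins between lo_hz and hi_hz."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [s - mean for s in samples]  # remove DC offset
    total, count = 0.0, 0
    for k in range(1, n // 2 + 1):
        freq = k * fs / n
        if lo_hz <= freq <= hi_hz:
            re = sum(x * math.cos(2 * math.pi * k * i / n)
                     for i, x in enumerate(centered))
            im = sum(x * math.sin(2 * math.pi * k * i / n)
                     for i, x in enumerate(centered))
            total += (re * re + im * im) / (n * n)
            count += 1
    return total / count if count else 0.0

def decode_intent(window, fs, rest_power, erd_ratio=0.6):
    """Assumed rule: 'walk' when mu power falls below erd_ratio * rest_power."""
    mu = band_power(window, fs, 8.0, 12.0)
    return "walk" if mu < erd_ratio * rest_power else "idle"

def synthetic_eeg(fs, seconds, mu_amplitude):
    """Hypothetical test signal: a 10 Hz mu rhythm plus a weaker 20 Hz tone."""
    n = int(fs * seconds)
    return [mu_amplitude * math.sin(2 * math.pi * 10 * i / fs)
            + 0.2 * math.sin(2 * math.pi * 20 * i / fs)
            for i in range(n)]

FS = 128  # samples per second (assumed)
rest = synthetic_eeg(FS, 1.0, mu_amplitude=1.0)      # calibration at rest
imagery = synthetic_eeg(FS, 1.0, mu_amplitude=0.4)   # suppressed mu rhythm
REST_POWER = band_power(rest, FS, 8.0, 12.0)

print(decode_intent(rest, FS, REST_POWER))
print(decode_intent(imagery, FS, REST_POWER))
```

A real system would likely use many channels, spatial filtering, and a trained classifier rather than one threshold, which is part of why calibration takes so long.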

Critics have questioned whether virtual mobility translates to tangible psychological benefits. Yet preliminary findings suggest profound impacts: participants reported reduced feelings of depression and isolation after just weeks of regular VR sessions. One study noted a 40% increase in self-reported quality-of-life metrics, with patients expressing renewed hope for future therapies. The metaverse, in this context, isn’t an escape from reality but a bridge to reclaiming parts of life once deemed lost.

Ethical considerations loom large, however. As corporations race to monetize the metaverse, advocates warn against treating assistive technologies as niche markets rather than universal rights. "This isn’t about selling headsets," insists Dr. Elena Voss, a bioethicist involved in the project. "It’s about ensuring equitable access to technologies that restore fundamental human experiences." The team has open-sourced key components of their BCI software, a move aimed at democratizing innovation while pressuring profit-driven platforms to prioritize inclusivity.

The road ahead is fraught with challenges. Current systems require extensive calibration and struggle with complex movements like climbing stairs or dancing. Bandwidth limitations of EEG also mean that finer gestures—say, typing or playing a virtual piano—remain elusive. Some researchers advocate for hybrid approaches, combining BCI with residual muscle signals or eye tracking. Others push for invasive implants, which offer higher precision but carry surgical risks. The debate reflects a broader tension in neurotechnology: balancing immediacy with safety, ambition with accessibility.
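As a rough illustration of the hybrid idea, the sketch below lets a BCI decide whether the avatar moves while eye tracking decides where it turns. Everything here is an assumption made for illustration: the `fuse` function, the `AvatarCommand` type, the 0.7 confidence threshold, and the speed and turn limits do not come from the project.

```python
import math
from dataclasses import dataclass

@dataclass
class AvatarCommand:
    speed: float    # metres per second in the virtual world
    heading: float  # radians relative to straight ahead; positive turns right

def fuse(bci_walk_prob, gaze_x, max_speed=1.2, threshold=0.7,
         max_turn=math.pi / 4):
    """Combine a BCI 'walk' probability with horizontal gaze (-1 left, +1 right).

    The BCI gates motion; gaze only steers. All limits are hypothetical.
    """
    if not 0.0 <= bci_walk_prob <= 1.0:
        raise ValueError("bci_walk_prob must be between 0 and 1")
    gaze_x = max(-1.0, min(1.0, gaze_x))  # clamp noisy tracker output
    if bci_walk_prob < threshold:
        # Low decoder confidence: stay still rather than risk a false step.
        return AvatarCommand(speed=0.0, heading=0.0)
    # Scale speed with how far the confidence exceeds the threshold.
    speed = max_speed * (bci_walk_prob - threshold) / (1.0 - threshold)
    return AvatarCommand(speed=speed, heading=gaze_x * max_turn)

print(fuse(0.5, 0.8))   # below threshold: avatar stays put
print(fuse(0.9, -0.5))  # confident intent, looking left
```

Splitting the roles this way keeps the low-rate EEG signal responsible for a single yes/no decision and hands the finer, continuous control to the faster input.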

What’s undeniable is the symbolic power of seeing a paralyzed individual "walk" alongside others in a shared digital space. Social VR platforms experimenting with this technology report unexpected outcomes: able-bodied users adjusting their behaviors to match the pace of BCI users, spontaneous collaborations in virtual workplaces, even romantic relationships blossoming where physical limitations might have deterred connection. The metaverse, often derided as isolating, may ironically become humanity’s most inclusive public square yet.

As the project scales, its architects envision a future where the lines between therapy and daily life blur. Imagine BCI-VR systems integrated into smart homes, allowing users to "rise" from bed to open curtains via avatar, or attend family gatherings as a fully mobile digital presence. The implications for telehealth are equally staggering—a neurologist in Berlin could guide a patient in Buenos Aires through rehabilitation exercises within the same virtual clinic. Such scenarios hinge on global cooperation to standardize protocols and safeguard neural data, a frontier as legally untested as the technology itself.

For now, the team’s focus remains on refining the emotional resonance of the experience. Early iterations prioritized functional movement, but user feedback revealed deeper yearnings: the crunch of virtual gravel underfoot, the ability to look down and see a body that responds, the catharsis of running after years of stillness. Next-generation environments will incorporate haptic feedback suits and olfactory stimuli to deepen immersion. "It’s not enough to move," explains lead developer Marcus Yee. "We’re engineering joy."

The project’s most poignant moment came unexpectedly during a stress test. A participant navigating a virtual park encountered a steep hill, an obstacle the team hadn’t anticipated. Instead of frustration, the researchers witnessed quiet determination as the user leaned forward in their chair, neural activity surging until their avatar conquered the incline. Later, the participant confessed: "For the first time since my accident, I felt tired in the best possible way." In that exhaustion lay victory, a reminder that even in synthetic worlds, the human spirit remains indomitable.

By /Aug 14, 2025

By /Oct 20, 2025
